Caching in Travel: Why Standard Strategies Break

Caching is supposed to be simple.

Store frequently accessed data. Return it fast. Refresh periodically. Standard practice in every web application.

Then you try to apply it to travel APIs, and everything breaks.

Why Standard Caching Fails in Travel

Most web applications cache data that changes slowly.

Product catalogs update daily. User profiles change when users edit them. News articles are static once published.

Standard caching works because the data has predictable update patterns and being slightly stale costs little.

Travel data is different.

Hotel prices change every few minutes. A rate that was $200 at 10:00 AM might be $185 at 10:15 AM — or sold out entirely.

Availability shifts in real time. The room you showed a user 30 seconds ago might not exist anymore. Someone else booked it.

Stale data has serious consequences. Show a user a $150 rate, they click through, and suddenly it's $180 at checkout. They abandon. Or worse, they complete the booking and dispute the charge.

Standard caching strategies — cache for 5 minutes, cache for an hour, cache until manually invalidated — don't account for this.

The Tradeoffs Platforms Face

Cache too long: Users see stale prices. Availability shows rooms that are sold out. Bookings fail because cached data doesn't match reality. Conversion drops. Support costs spike.

Cache too short: Every search hits live supplier APIs. Response times climb from under 2 seconds to more than 5. Supplier rate limits get hit. Infrastructure costs increase. User experience degrades.

Don't cache at all: Infrastructure can't handle the load. Searches slow to a crawl during peak times. Suppliers throttle your requests. Costs become unsustainable.

Most platforms pick one of these bad options, then spend months trying to optimize their way out of the tradeoff.

The Search vs Booking Problem

The complexity compounds because caching needs differ by use case.

Search results can tolerate some staleness. If a price is 2% off when a user first sees it, they probably won't notice. The goal is speed — show results fast, let users filter and compare.

Booking flow cannot tolerate staleness. When a user clicks "Book Now," the price and availability must be exact. No surprises at checkout. No failures because cached data was wrong.

Platforms that use the same caching strategy for both end up with slow searches or unreliable bookings.

What Content vs Pricing Requires

Not all travel data changes at the same rate.

Hotel content — name, address, description, images, amenities — changes rarely. Maybe once a month, or when a property updates its listing. This can be cached aggressively.

Pricing and availability change every few minutes and cannot be cached the same way.

Platforms that cache everything with the same TTL (time-to-live) waste resources refreshing data that hasn't changed, or serve stale data that's already wrong.

The strategy needs to be layered: static content cached long, dynamic data cached short or not at all.
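A minimal sketch of what "layered" could look like in code, assuming an in-memory store and three illustrative data kinds. The class names, the TTL values, and the `LayeredCache` API are all hypothetical; the point is only that the TTL is looked up per data kind rather than set once for the whole cache.

```python
from dataclasses import dataclass
from enum import Enum


class DataKind(Enum):
    CONTENT = "content"              # names, addresses, descriptions, images
    SEARCH_PRICE = "search_price"    # prices shown in search results
    BOOKING_PRICE = "booking_price"  # price confirmed at checkout


# Illustrative TTLs in seconds (assumptions). None means "never serve from cache".
TTL_SECONDS = {
    DataKind.CONTENT: 24 * 60 * 60,
    DataKind.SEARCH_PRICE: 60,
    DataKind.BOOKING_PRICE: None,
}


@dataclass
class Entry:
    value: object
    stored_at: float


class LayeredCache:
    """In-memory cache whose expiry depends on the kind of data stored."""

    def __init__(self):
        self._entries = {}

    def put(self, kind, key, value, now):
        if TTL_SECONDS[kind] is None:
            return  # this kind of data is never cached
        self._entries[(kind, key)] = Entry(value, now)

    def get(self, kind, key, now):
        ttl = TTL_SECONDS[kind]
        entry = self._entries.get((kind, key))
        if ttl is None or entry is None:
            return None
        if now - entry.stored_at >= ttl:
            return None  # expired: the caller should fetch fresh data
        return entry.value
```

Passing `now` explicitly keeps the sketch easy to test. A real deployment would more likely sit on a shared store and read the clock itself.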

Supplier Variability Makes It Worse

Different suppliers have different update frequencies.

Supplier A updates rates every 5 minutes. Supplier B updates every hour. Supplier C has real-time availability but batch-updates pricing twice a day.

A single caching strategy can't optimize for all of them.

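Cache Supplier A's data on Supplier B's hourly schedule and, for most of each hour, you're serving rates that Supplier A has likely already replaced. Cache Supplier B's data on Supplier A's 5-minute schedule and most of your refreshes fetch data that hasn't changed. Give Supplier C a single TTL for everything and you either re-fetch twice-daily pricing far more often than it changes or show availability that is no longer real.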

Platforms either over-cache (stale data, booking failures) or under-cache (slow performance, high costs).
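One way to avoid both failure modes is to derive the TTL from each supplier's own update cadence. The sketch below uses the Supplier A, B, and C intervals from the example above. The table layout, the `ttl_for` helper, the safety factor of 0.5, and the 60-second default are assumptions chosen for illustration, not recommended values.

```python
# Update intervals in seconds, taken from the Supplier A/B/C example.
# 0 means the supplier exposes the field in real time.
UPDATE_INTERVAL_SECONDS = {
    ("supplier_a", "price"): 5 * 60,
    ("supplier_b", "price"): 60 * 60,
    ("supplier_c", "price"): 12 * 60 * 60,
    ("supplier_c", "availability"): 0,
}


def ttl_for(supplier, field, safety_factor=0.5, default_ttl=60):
    """Return a TTL in seconds for one supplier field.

    The TTL is a fraction of the supplier's update interval, so an entry
    expires before the next expected update. A result of 0 means
    "do not cache". Unknown suppliers or fields get a short default.
    """
    interval = UPDATE_INTERVAL_SECONDS.get((supplier, field))
    if interval is None:
        return default_ttl
    return int(interval * safety_factor)
```

With these numbers, Supplier A's prices get a 150-second TTL, Supplier B's get 30 minutes, and Supplier C's availability is never cached.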

The Peak Load Problem

Caching isn't just about speed. It's about surviving peak load.

Black Friday. Holiday weekends. Flash sales. Sudden traffic spikes from marketing campaigns.

When traffic doubles in an hour, platforms that rely on live API calls for everything get throttled by suppliers, hit rate limits, or simply can't respond fast enough.

Caching is the buffer that lets platforms handle peak load without degrading. But only if it's done right.

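Naive caching (cache everything for 10 minutes) helps less than expected during peaks. When a popular entry expires under heavy traffic, every concurrent request for it misses at once and goes to the supplier, so the cache gives way right when you need it most.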
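One common mitigation for synchronized expiry is to add random jitter to each TTL so that entries written together do not all expire together. The sketch below is an assumption about what "done right" could include. The `jittered_ttl` helper and the 20% spread are hypothetical.

```python
import random


def jittered_ttl(base_ttl, jitter_fraction=0.2, rng=random):
    """Return base_ttl shifted by a random amount within +/- jitter_fraction.

    Spreading expiry times means entries cached in the same burst of
    traffic do not all miss, and hit the supplier, at the same moment.
    """
    spread = base_ttl * jitter_fraction
    return base_ttl + rng.uniform(-spread, spread)
```

For a 10-minute base TTL and the default spread, each entry lives somewhere between 8 and 12 minutes. Jitter does not stop many concurrent requests from missing on the same key. That usually needs request coalescing as well.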

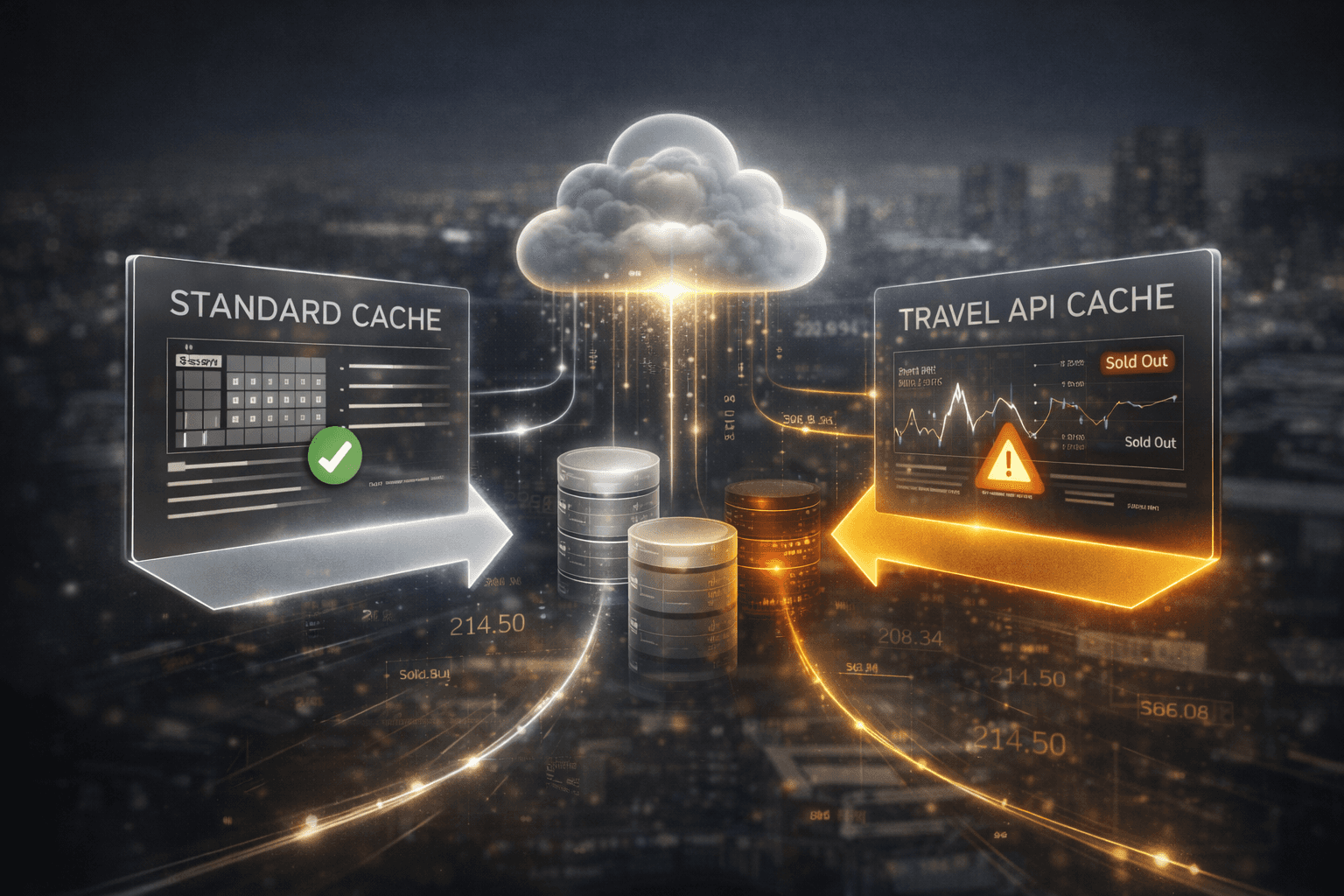

What Actually Works

Smart caching in travel isn't about one strategy. It's about layered strategies based on data type and use case.

Static content: Cache aggressively. Hotel names, addresses, descriptions, images. Refresh daily or when suppliers signal an update.

Search results: Cache with short TTLs (30-90 seconds) and use cached data as a baseline while triggering background refreshes. Show results fast, then update them if the refreshed prices differ significantly.
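The "serve cached, refresh in the background" pattern for search results is often called stale-while-revalidate. Here is a minimal sketch using a thread pool. `SearchCache`, the `fetch` callable, and the 60-second freshness window are hypothetical names and values, and pushing updated prices to the user is left out.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class SearchCache:
    """Serve cached results immediately; refresh stale entries in the background."""

    def __init__(self, fetch, fresh_for=60.0, clock=time.monotonic):
        self._fetch = fetch            # hypothetical callable: key -> results
        self._fresh_for = fresh_for    # seconds before an entry counts as stale
        self._clock = clock
        self._entries = {}             # key -> (results, stored_at)
        self._refreshing = set()       # keys with a refresh already in flight
        self._lock = threading.Lock()
        self.pool = ThreadPoolExecutor(max_workers=4)

    def _refresh(self, key):
        try:
            results = self._fetch(key)
            with self._lock:
                self._entries[key] = (results, self._clock())
            return results
        finally:
            with self._lock:
                self._refreshing.discard(key)

    def get(self, key):
        with self._lock:
            entry = self._entries.get(key)
            if entry is not None:
                results, stored_at = entry
                is_stale = self._clock() - stored_at >= self._fresh_for
                start_refresh = is_stale and key not in self._refreshing
                if start_refresh:
                    self._refreshing.add(key)
        if entry is None:
            return self._refresh(key)  # cold miss: nothing to serve, fetch inline
        if start_refresh:
            self.pool.submit(self._refresh, key)
        return results
```

A stale entry is still returned straight away, and only one refresh per key runs at a time. If the background fetch fails, the old entry stays in place and the next request triggers another attempt.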

Booking flow: Don't cache. Always query live. Price and availability must be exact at the moment of booking.

Availability checks: Use real-time data with intelligent fallback. If a supplier times out, use last-known-good data with a warning flag rather than failing the entire search.
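The last-known-good fallback for availability can be sketched as follows. `SupplierTimeout`, `Availability`, and `AvailabilityChecker` are hypothetical names. A real supplier client would raise its own timeout exception.

```python
from dataclasses import dataclass
from typing import Optional


class SupplierTimeout(Exception):
    """Raised by the (hypothetical) supplier client when a call times out."""


@dataclass
class Availability:
    rooms_left: int
    is_fallback: bool  # True when the value comes from last-known-good data


class AvailabilityChecker:
    def __init__(self, fetch_live):
        self._fetch_live = fetch_live   # hypothetical callable: hotel_id -> rooms left
        self._last_known_good = {}

    def check(self, hotel_id) -> Optional[Availability]:
        try:
            rooms_left = self._fetch_live(hotel_id)
        except SupplierTimeout:
            if hotel_id not in self._last_known_good:
                return None  # nothing to fall back to; the caller can skip this hotel
            return Availability(self._last_known_good[hotel_id], is_fallback=True)
        # Live call succeeded: remember it for future fallbacks.
        self._last_known_good[hotel_id] = rooms_left
        return Availability(rooms_left, is_fallback=False)
```

The `is_fallback` flag is what lets the search page show a warning instead of presenting old availability as confirmed.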

Deduplication mappings: Cache heavily. Hotel-to-hotel mappings across suppliers don't change often. These should be precomputed and cached long-term.

The strategy isn't "cache or don't cache." It's "cache what, for how long, and with what fallback logic."

Cache Invalidation: The Hardest Problem

The classic computer science joke: There are only two hard problems — naming things and cache invalidation.

In travel, cache invalidation is genuinely hard because suppliers don't tell you when data changes.

You don't get a webhook saying "Hotel X's rates just updated." That leaves three options:

Poll constantly (expensive, defeats the purpose of caching)

Use time-based expiration (risks stale data)

Accept occasional staleness and handle it gracefully (the pragmatic approach most successful platforms take)

The third option is what works. Cache with reasonable TTLs, but build booking flows that revalidate before confirming, show users updated prices if they changed, and handle edge cases gracefully when cached data doesn't match reality.
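The revalidation step before confirming a booking might look like the sketch below. It assumes the booking flow has already fetched a live quote from the supplier. `Quote`, `BookingDecision`, and `revalidate` are hypothetical names.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass
class Quote:
    price: Decimal
    available: bool


@dataclass
class BookingDecision:
    status: str               # "confirm", "price_changed", or "sold_out"
    price: Optional[Decimal]  # live price to show the user, if any


def revalidate(shown_price: Decimal, live_quote: Quote) -> BookingDecision:
    """Compare the price the user saw with a live quote before confirming."""
    if not live_quote.available:
        return BookingDecision("sold_out", None)
    if live_quote.price != shown_price:
        # Do not charge silently: surface the new price and ask again.
        return BookingDecision("price_changed", live_quote.price)
    return BookingDecision("confirm", live_quote.price)
```

For the $150 versus $180 case described earlier, this returns `price_changed` with the live price, so the user sees the new amount before paying rather than at checkout.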

How We Think About It

At Volt, caching is layered by data type and use case, not by blanket TTLs.

Static content is cached long-term. Search results are cached with short TTLs and intelligent refresh logic. Booking flows query live, with fallback strategies that don't break the experience when a supplier times out.

Deduplication and mapping data (the output of the algorithms that match properties and rooms across suppliers) is precomputed and cached heavily because it rarely changes and is expensive to regenerate.

Platforms building on Volt get the benefit of this without having to architect it themselves. Fast search performance without stale booking data. Peak load handling without manual scaling. Intelligent fallbacks when suppliers go down.

Caching isn't something we bolted on. It's part of the infrastructure design.

What This Means for Platforms

If you're building your own infrastructure, budget for caching complexity. It's not a one-week project. It's an ongoing architecture challenge that affects performance, reliability, and cost.

If your current caching strategy is "cache everything for 10 minutes," you're either serving stale data or hitting suppliers more than you need to.

If you're not caching at all because it's "too complex," you're leaving performance and cost savings on the table — and your infrastructure won't handle peak load well.

The platforms that get caching right don't have faster servers or more budget. They have better architecture.

Standard caching strategies break in travel because travel data doesn't behave like standard web data.

The solution isn't to cache harder. It's to cache smarter.